Jetpack project is taking an aim at rewriting the extension system, learning from the past, responding to the feedback and thinking outside of the box. So when the Jetpack team approached L10n-Drivers and asked for support with building localization infrastructure for new addons, we decided to go the same way. 🙂

Common Pool

The first approach we’re presenting you is called Common Pool (yup, that’s not a very creative name) – it’s basic assumption is that most jetpacks are small applications with a minimal UI. And there are going to be tons of them. We already have tens of thousands of extensions, and Jetpack with its nice API and builder are going to make it much, much easier to write a good extension.

We also made some observations – There are common phrases, like “Cancel“, “Ignore“, “Log in” that are replicated over and over in almost every extension. It’s becoming hard to localize it, hard to keep it consistent and maintain the localization. On the other hand, extension authors are struggling to work with localizers as they need to iterate fast and string freeze phases are adding a lot of cost to the release process.

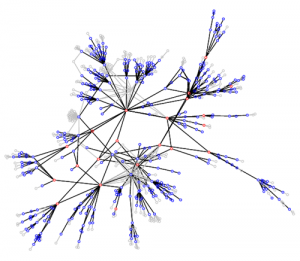

For that reasons we decided to build on a basic concept of entities shared across extensions, stored on a server, and pulled by the jetpack itself.

Shared entities are powerful. For a localizer it means that we can show you the list of mostly used entities and if you localize 20 of them, you localized 50, 100 or maybe 500 extensions. You can update a translation and have it translated the same way across all extensions. You can localize only one extension and your work will probably benefit other extensions as well. You can localize today, and tomorrow all users will get updated localizations. How cool is that? 🙂

For a add-on developer it also means more good. You can pick entities that are already localized into many languages and have you extension Just Work. You don’t have to worry about releases and localization because if you only make thing localizable, users will be able to pull localizations as they appear. It means less to think about.

Now, there are of course some trade offs. One of them is that not all translations of a word should be equal. For that reason we propose a concept of an exception. Actually, two of them.

First one is a per-addon exception which allows localizers to localize a given string differently for this particular addon. It’s important when the addon uses some specific vocabulary.

Second one is a per-case exception which allows localizers to divert from the common translation for a specific use case. Say, the use case in jetpack X, file main.js line 43 should be translated differently.

Library and the Web Service

So, that’s the concept, and it has been implemented as a library. Yahoo! Now, for such a solution to work, we knew we need a partner in crime. We need a web service that will support Common Pool and allow for addon localization. We knew we will want to merge it with AMO and the Builder, so django was a preferred technology, and one other requirement was to be able to support a localization storage model that is substantially different from the industry standard.

Fortunately, at the time when we conceptualized Common Pool, our friends from Transifex were reworking their storage model away from gettext-specific to much more generic database driven one. Well then – good time to see how generic it can be, right?

Together with Transifex team, mainly Dimitris Glezos and Seraphim Mellos we’ve been working on the Common Pool web service that can back up the library and become a localization web tool. Today, the first iteration of the tool is alive at – https://l10n.mozillalabs.com! The source code is GPL and available at transifex-commonpool bitbucket repository and that’s where we’re collecting bugs as well.

What’s next?

Now we have to prove that the concept in the field. We’re challenging the status quo, so it’s on us to validate it. We’re going to reach out to extension authors and localizers alike to get support and testing coverage and if we that it works, we’ll include common pool library into Addon SDK 1.0. It’s up to you to help us evaluate it!

Then, it’ll be time to complement Common Pool with another architecture, that does not solve the simple cases that easily, and require much more active work from the developer, but will provide better level of details for both parties. The solution we want to offer is L20n.

Bundle pack ahead

Together, Common Pool and L20n should provide everything that localizers and developers may need pushing the limits and stretching what’s possible with the localization. We think of a Common Pool as a great generic, easy solution for most common strings, while L20n complements it with more sophisticated, more powerful approach for the developers whose extension reached the level when they want it to be localized with more attention and where the UI requirements are higher.

Let me know what you think – I know it’s early, I gave many talks about those technologies to our Mozilla communities, but I realize that it’s hard to evaluate without actual code in hand, so now is the time for feedback, feedback, and even more feedback 🙂

In the next blog post I’ll explain the steps to create your first common-pool driven extension.